How It Works: Step-by-Step for Each AI Type

WorldSim doesn't feed directly into your model. It defines the macro environment that determines the statistical properties of your model's input population. Here's exactly how the connection works for each AI type.

Credit Scoring Model

How WorldSim connects:

WorldSim's role is to define the structured macro environment; the bank's existing stress testing infrastructure handles the macro-to-micro translation. WorldSim adds value in three ways: structurally coherent scenarios (the full coupled cascade rather than "unemployment +5pp" in isolation), cross-country coverage (27 EU markets), and distributional output (P10/P50/P90 rather than a single stress point).
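To make the division of labour concrete, here is a minimal Python sketch of what the bank-side macro-to-micro step might look like once a scenario has been exported. Everything in it is an assumption for illustration: the function `stressed_pd`, the log-odds link, the sensitivity coefficients, and the shock values are hypothetical stand-ins for the bank's own satellite model, not part of WorldSim.

```python
import math

def stressed_pd(baseline_pd, macro_shocks, sensitivities):
    """Shift a baseline probability of default in log-odds space.

    Hypothetical satellite model: a linear combination of macro shocks
    is added to the logit of the baseline PD.
    """
    logit = math.log(baseline_pd / (1.0 - baseline_pd))
    logit += sum(sensitivities[name] * macro_shocks[name] for name in sensitivities)
    return 1.0 / (1.0 + math.exp(-logit))

# Hypothetical sensitivities (log-odds change per percentage point of shock).
SENSITIVITIES = {"unemployment_pp": 0.25, "rate_pp": 0.10}

# Hypothetical macro shocks at three points of a scenario distribution.
scenarios = {
    "P10": {"unemployment_pp": 0.5, "rate_pp": 0.2},
    "P50": {"unemployment_pp": 2.0, "rate_pp": 1.0},
    "P90": {"unemployment_pp": 5.0, "rate_pp": 2.5},
}

# Evaluate the same portfolio baseline PD under each scenario point.
pd_by_percentile = {
    label: stressed_pd(0.02, shocks, SENSITIVITIES)
    for label, shocks in scenarios.items()
}

for label, pd_value in pd_by_percentile.items():
    print(f"{label}: stressed PD = {pd_value:.4f}")
```

The point of the sketch is the shape of the hand-off: the scenario supplies coupled macro shocks at several percentiles, and the bank's own model turns each into a portfolio-level quantity.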

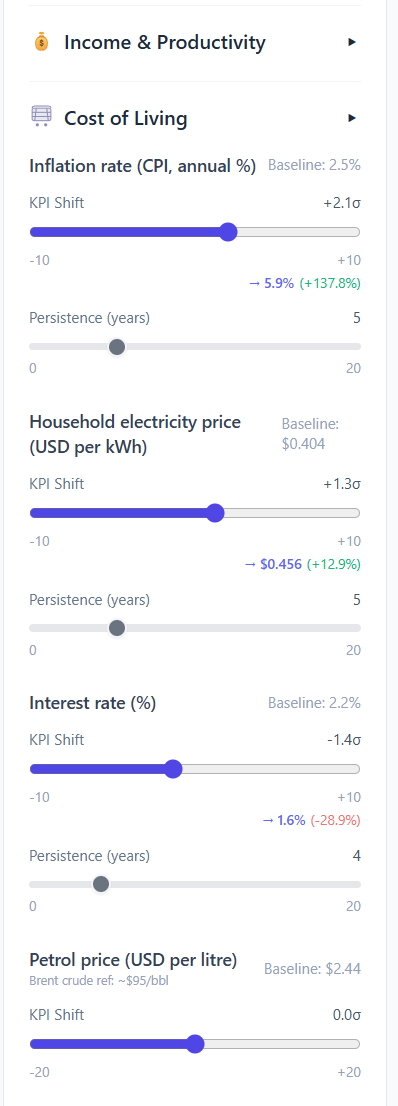

The bank configures macro tilts (inflation +2.1σ, electricity +1.3σ, rates -1.4σ) to define each stress scenario. Each tilt translates to real-world values shown in the sidebar.
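As a rough illustration of how a tilt expressed in σ could map to a real-world value, the sketch below assumes a simple linear rule: level = baseline mean + tilt × baseline standard deviation. That rule, the `Baseline` type, the variable names, and all baseline figures are hypothetical; they are not WorldSim's calibration, and the actual translation may differ.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Baseline:
    mean: float  # hypothetical long-run mean of the variable
    std: float   # hypothetical historical standard deviation

# Hypothetical baselines for illustration only (not WorldSim values).
BASELINES = {
    "inflation_pct": Baseline(mean=2.0, std=1.5),
    "electricity_eur_mwh": Baseline(mean=80.0, std=30.0),
    "policy_rate_pct": Baseline(mean=2.5, std=1.0),
}

def tilt_to_level(variable, tilt_sigma):
    """Convert a tilt in standard deviations into a level (assumed linear rule)."""
    baseline = BASELINES[variable]
    return baseline.mean + tilt_sigma * baseline.std

# The tilts from the example scenario above.
scenario_tilts = {
    "inflation_pct": 2.1,
    "electricity_eur_mwh": 1.3,
    "policy_rate_pct": -1.4,
}

scenario_levels = {
    name: round(tilt_to_level(name, tilt), 2)
    for name, tilt in scenario_tilts.items()
}

for name, level in scenario_levels.items():
    print(f"{name}: {level}")
```

Under these assumed baselines, a +2.1σ inflation tilt corresponds to 2.0 + 2.1 × 1.5 = 5.15 percent.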

AI Hiring / Recruitment Tool

How WorldSim connects (for the AI vendor or data science team building the tool):

GPAI / Foundation Model (Systemic Risk)

How WorldSim connects:

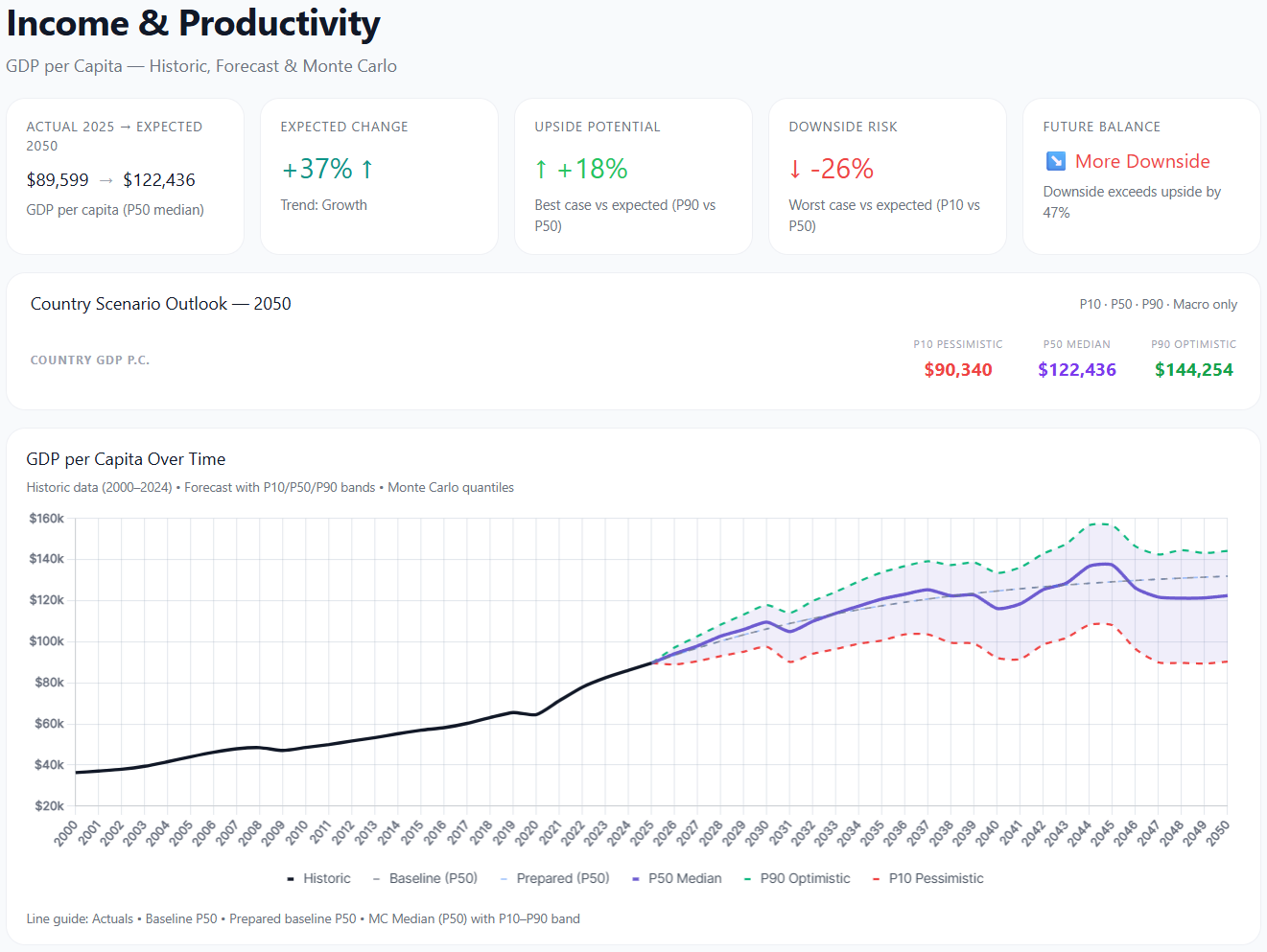

WorldSim produces full distributional outputs (P10/P50/P90) for every KPI. For GPAI systemic risk assessment, these distributions quantify the range of economic outcomes that AI-driven decisions could influence or amplify.
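For readers unfamiliar with the P10/P50/P90 notation, the following sketch shows how such a summary can be computed from a set of simulated outcomes for one KPI. The simulated draws, the KPI name, and the helper `summarize_kpi` are hypothetical; the sketch says nothing about how WorldSim generates its distributions.

```python
import random
import statistics

def summarize_kpi(samples):
    """Return the 10th, 50th and 90th percentiles of a list of outcomes."""
    # quantiles(n=10) returns the nine decile cut points P10, P20, ..., P90.
    deciles = statistics.quantiles(samples, n=10, method="inclusive")
    return {"P10": deciles[0], "P50": deciles[4], "P90": deciles[8]}

# Hypothetical simulated outcomes for one KPI (unemployment rate, percent).
rng = random.Random(42)
unemployment_draws = [rng.gauss(7.5, 1.2) for _ in range(5000)]

summary = summarize_kpi(unemployment_draws)
for label, value in summary.items():
    print(f"{label}: {value:.2f}")
```

Reporting the three percentiles together conveys both the central outcome (P50) and the width of the plausible range (P10 to P90), which a single stress point cannot.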